
The aim would not be to go wild, but to get something very close to the arrangement and content seen in the real-time viewport, and all consistent between images, enough to satisfy even the most persnickety regular comics reader. All output by the AI would go to the usual masked PNG file, ready to drop over a 2D backplate in Photoshop.

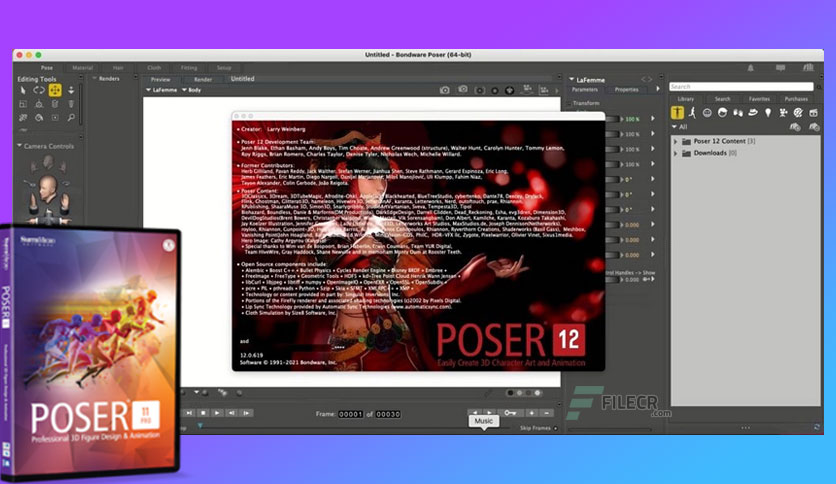

Imagine an AI that takes what you see in the Poser viewport and works on that, giving you 98% consistent character renders, which would allow the creation of graphic novels etc. The aim would be to keep all character details consistent and stable (face, expression, pose, clothes, camera angle), while also ‘AI rendering’ the viewport into a consistent professional ‘art style’. You can kind of do all this now, outside of Poser, using Poser renders. But what if it was all neatly integrated into Poser, and ran on SDXL? Auto-analysis of a quick real-time render from the viewport might be needed (already here, elsewhere), along with auto-prompt construction (already here). Perhaps there might be some on-the-fly LoRA training going on too, behind the scenes. Then the AI image generation would be done. All those royalty-free runtime assets then become super-valuable, since with their aid you can easily get the AI to do exactly what you want. Poser could be the ‘killer app’ in creative AI, in terms of usable graphics production for storytelling.
